We customised an object detection model for reading analogue meters.

We established an AI development and batch inference pipeline on Databricks.

We set up automatic data ingestion from a unique low-power camera system.

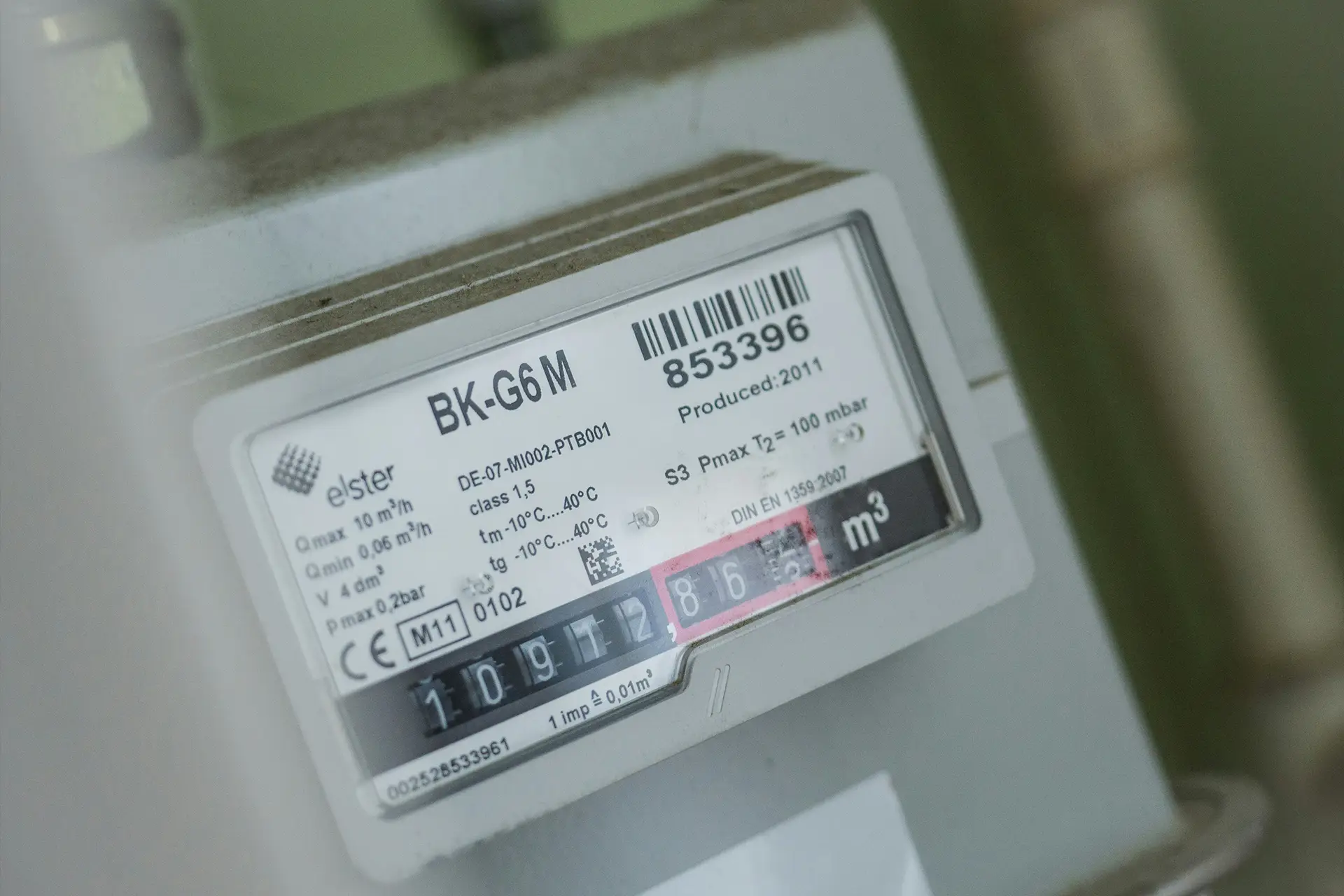

Our client is a European technology company that provides an energy monitoring platform for organisations with multiple buildings. Many of their clients still use traditional analogue water and gas meters, which are costly to replace. To bridge this gap, our client developed a clever low-power camera system that photographs these meters remotely.

However, the system's low-power design created a data hurdle: the cameras produce challenging, lower-quality images taken from an angle. Standard automated reading tools like OCR couldn't reliably extract the meter values from this visual data. The client approached us to create a custom AI solution capable of accurately interpreting these specific images for their platform.

Our client can now automatically and reliably read analogue water and gas meters from challenging camera images, removing the need for costly manual processes and site visits. Meter data flows directly into their platform, with the AI model consistently tracking readings and handling image quality issues and digit ambiguities without operator input.

As a result, our client saves significant time and operational expense, while their end customers benefit from up-to-date, accurate consumption insights and a scalable system ready for thousands of devices.


Our goal for this project was clear: we needed to deliver a working proof-of-concept (PoC) fast, but it also had to be built on a solid foundation that could support future growth.

The client already used Microsoft Azure for their operations, which made the decision simple: we would build the solution within their existing environment. This kept the setup straightforward and made cost tracking easier.

We drew on our experience with integrated machine learning platforms and recommended Databricks. This gave the client a unified workspace in which to handle data, fine-tune models, manage training, and track everything using MLflow and Unity Catalog. The setup significantly shortened our development cycles, which is especially valuable when building a proof of concept.

The deep domain knowledge and proactiveness of the client's team were major reasons for the project's success. For example, our first analysis showed that the raw images needed perspective correction before we could use them effectively. Their experts immediately took on this pre-processing task themselves. They also handled the critical job of labelling the image data, which was essential for training the AI model.
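Perspective correction of this kind is commonly done with a homography: four points outlining the angled digit window are mapped onto an upright rectangle. The pure-Python sketch below computes that 3x3 mapping from four point pairs. It is an illustration under our own assumptions (the four corner points are already known, for example marked once per camera, and the function names are hypothetical), not the client's actual pre-processing code.

```python
"""Minimal sketch of four-point perspective correction via a homography.

Assumption: the four corners of the digit window are known in advance.
Illustrative only; not the client's pre-processing implementation.
"""


def solve_linear(a, b):
    """Solve a @ x = b using Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution on the upper-triangular system
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def homography(src, dst):
    """Return the 3x3 matrix H (with H[2][2] = 1) mapping four src points to dst."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h0*x + h1*y + h2) / (h6*x + h7*y + 1), rearranged to be linear in h
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        # v = (h3*x + h4*y + h5) / (h6*x + h7*y + 1), same rearrangement
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b) + [1.0]
    return [h[0:3], h[3:6], h[6:9]]


def warp_point(h, x, y):
    """Apply H to the point (x, y), including the perspective divide."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)
```

For example, `homography([(12, 40), (300, 20), (310, 95), (8, 110)], [(0, 0), (320, 0), (320, 80), (0, 80)])` maps a skewed digit window onto a 320 by 80 rectangle. For whole images, OpenCV offers the same computation as `cv2.getPerspectiveTransform`, with `cv2.warpPerspective` applying it to every pixel.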

This high level of client involvement meant that we could concentrate entirely on the AI system itself. The project moved forward much faster as a result. A truly streamlined way of working together!

We ran some early tests, which quickly confirmed that standard OCR techniques wouldn't work well enough on these images. The low quality, the angled camera perspective, and the way digits transition on analogue meters (sometimes showing parts of two numbers at once) were too challenging. So, we decided on a different path: object detection using the well-established YOLO (You Only Look Once) model.

We specifically trained YOLO for the task of detecting the digits 0 through 9 within the meter images. This method turned out to be much better suited for several reasons:

Training the model was (as always with AI and ML) an iterative cycle. We started with the labelled data and trained the initial models. The client then evaluated their performance by plotting the predicted readings in their dashboard and visually checking for strange results or anomalies. They then supplied new labelled data, which we used to retrain the model. With each cycle, the model's accuracy steadily improved.
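Labelled data for a YOLO-style detector is usually stored as one text file per image, with one line per object: a class index followed by the box centre and size, each normalised by the image width or height. The sketch below converts a pixel bounding box into such a line and back. It assumes, purely for illustration, that class indices 0 to 9 map directly to the digits 0 to 9; the function names are hypothetical.

```python
"""Sketch: digit bounding boxes to and from YOLO-format label lines.

Assumptions: class index == digit value (0-9); boxes are given in pixels
as (x_min, y_min, x_max, y_max).
"""


def to_yolo_line(digit, box, img_w, img_h):
    """Format one labelled digit as 'class cx cy w h' (normalised to 0..1)."""
    if not 0 <= digit <= 9:
        raise ValueError("digit must be in 0..9")
    x_min, y_min, x_max, y_max = box
    cx = (x_min + x_max) / 2 / img_w
    cy = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w
    h = (y_max - y_min) / img_h
    return f"{digit} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"


def from_yolo_line(line, img_w, img_h):
    """Parse a label line back into (digit, pixel box)."""
    cls, cx, cy, w, h = line.split()
    cx, w = float(cx) * img_w, float(w) * img_w
    cy, h = float(cy) * img_h, float(h) * img_h
    return int(cls), (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)
```

For instance, `to_yolo_line(7, (100, 20, 140, 80), 640, 160)` returns `"7 0.187500 0.312500 0.062500 0.375000"`. Normalised coordinates keep labels valid if images are resized before training.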

For the PoC, we set up a straightforward batch processing pipeline:
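As a rough illustration of the last step of such a pipeline, storing raw detections for later processing, here is a minimal sketch. It uses Python's built-in `sqlite3` as a stand-in for the actual database and a hypothetical `detect` callable in place of the trained YOLO model; the table name, column layout, and function name are all assumptions.

```python
import sqlite3
from typing import Callable, Iterable, List, Tuple

# Hypothetical raw detection: (digit, confidence, x_min, y_min, x_max, y_max)
Detection = Tuple[int, float, float, float, float, float]


def run_batch(
    conn: sqlite3.Connection,
    images: Iterable[Tuple[str, object]],
    detect: Callable[[object], List[Detection]],
) -> int:
    """Run `detect` on each (image_id, image) pair and store every raw detection."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS detections (
               image_id TEXT, digit INTEGER, confidence REAL,
               x_min REAL, y_min REAL, x_max REAL, y_max REAL)"""
    )
    # One row per detected digit; no meter reading is assembled at this stage
    rows = [(image_id, *det) for image_id, image in images for det in detect(image)]
    conn.executemany("INSERT INTO detections VALUES (?, ?, ?, ?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)
```

Keeping the stored output raw (every box with its location and confidence) leaves the interpretation of ambiguous digits to a later step rather than baking it into the model run.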

The client then takes this raw output from the database and applies their own post-processing logic to assemble the final meter reading. Using the location and confidence data from our model, they can interpolate missing digits or handle uncertain detections based on their domain expertise.
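The client's post-processing logic is their own. Purely to illustrate how location and confidence data can be turned into a reading, here is a minimal sketch. It assumes the detections come from a perspective-corrected digit window of known width with a fixed number of equally wide digit positions; it keeps the most confident detection per position above a threshold and falls back to the previous reading's digit where a position is empty. The function name, tuple layout, and 0.5 threshold are hypothetical.

```python
def assemble_reading(detections, window_width, n_digits, previous=None, min_conf=0.5):
    """Build an integer reading from (digit, confidence, x_min, x_max) tuples.

    Hypothetical helper: returns None if an empty position cannot be filled.
    """
    # best[i] holds (confidence, digit) for digit position i, or None if empty
    best = [None] * n_digits
    for digit, conf, x_min, x_max in detections:
        centre = (x_min + x_max) / 2
        # Map the box centre to one of n_digits equally wide positions
        slot = max(0, min(int(centre / window_width * n_digits), n_digits - 1))
        # Ignore low-confidence boxes; otherwise keep the most confident one
        if conf >= min_conf and (best[slot] is None or conf > best[slot][0]):
            best[slot] = (conf, digit)

    prev_digits = None
    if previous is not None:
        prev_digits = str(previous).zfill(n_digits)[-n_digits:]

    digits = []
    for i, entry in enumerate(best):
        if entry is not None:
            digits.append(str(entry[1]))
        elif prev_digits is not None:
            digits.append(prev_digits[i])  # fall back to the last known digit
        else:
            return None  # an empty position cannot be resolved
    return int("".join(digits))
```

Choosing the most confident box per position is one simple way to deal with a digit window that shows parts of two numbers at once; a production version would likely also flag readings lower than the previous one, since a cumulative meter should not run backwards.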

This first phase focused on proving the concept successfully for one specific meter type in a controlled setting. However, the architecture we chose and the collaborative way we worked together provide a strong foundation for what comes next.

We've already discussed future steps with the client. These include strategies for handling other types of analogue meter, perhaps by training a specific model for each type or by fine-tuning a general model. We also talked about creating an onboarding process for new meters as the client prepares to scale its camera deployment, potentially to thousands of units.

Looking for a custom AI solution that turns complex input into valuable data? Contact us and we'll gladly help you out!